In our previous two posts we described how to create an association Data Quality Management Plan and how to perform a Data Quality Assessment. In this last post in the three-part series we explain how to create a Common Language Dictionary and Data Quality Scorecard, as well as how to tackle data cleansing.

What is a Common Language Dictionary?

Have you ever been in a situation where two staff members try to determine a number (such as the number of new members) and each arrives at a different result? Sometimes this is due to staff pulling the data differently or even incorrectly, but often it is because they are operating with two different definitions for the same word. What is the definition of a “new member” in your organization? What about the definition of retention? One of the first things we do with our clients is create a common language dictionary (CLD) so that there is clear and consistent communication internally with staff and externally with board and committee members about the meaning of the metrics. Standardization and clarification also enable transparency, accountability and consensus.

As a group, you need to reach consensus on definitions for common metrics. Common terms for associations to define include:

- Prospect

- New member

- Active member

- Membership duration

- Renewed member

- Rejoined member

- Membership grace period

- Lapsed member

- First time registrant

- Full registrant
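Once the group agrees on definitions, it can help to write them down as explicit rules so that everyone pulls the numbers the same way. The sketch below is only an illustration: the 90-day grace period, the function names, and the definitions themselves are assumptions, not recommended values, and your own dictionary will likely differ.

```python
from datetime import date, timedelta
from typing import Optional

# Assumption for illustration only: a 90-day membership grace period.
GRACE_PERIOD = timedelta(days=90)


def classify_payment(paid_on: date, previous_expiration: Optional[date]) -> str:
    """Label a dues payment using the illustrative definitions in this sketch."""
    if previous_expiration is None:
        return "new member"       # no prior membership on record
    if paid_on <= previous_expiration + GRACE_PERIOD:
        return "renewed member"   # paid before expiration or within the grace period
    return "rejoined member"      # came back after the grace period ended


def membership_status(as_of: date, expiration: date) -> str:
    """Label a membership on a given date using the same grace period."""
    if as_of <= expiration:
        return "active member"
    if as_of <= expiration + GRACE_PERIOD:
        return "in grace period"
    return "lapsed member"
```

With rules like these written down, two staff members counting "new members" for the same month start from the same definition instead of two private ones.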

How do I Create a Data Quality Scorecard?

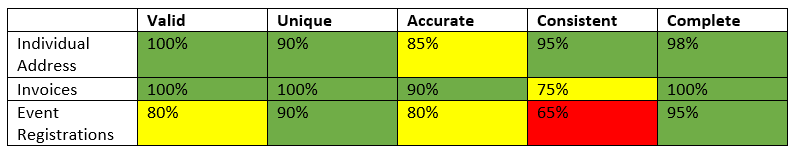

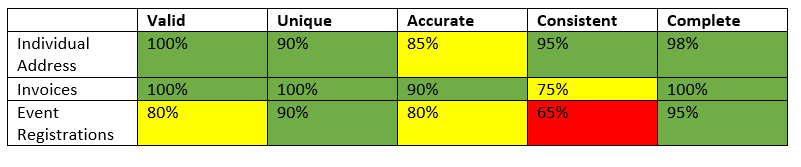

How do you know when you win if you don’t know where the finish line is? A data quality scorecard assigns a quality target to each of your data elements along the dimensions of validity, accuracy, consistency, completeness, and uniqueness. Start with a list of your important data elements and decide how important each one is to your association; that importance sets the target you will use to measure progress. Does all of your data need to be 100% unique? Is it OK if you have some duplicates? It might be OK to have a duplicate prospect record, but not a duplicate invoice! After you set the targets, take an honest look at your data and rate your current quality on each of the same dimensions. Repeat this rating throughout your initial data cleansing project and beyond. We have found it to be true that “what gets measured, gets done”!
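Some of these dimensions can be rated directly from the data. The following is a minimal sketch that computes completeness, uniqueness, and validity as percentages and compares them with targets. The sample records, field names, targets, and the simple email pattern are all made up for illustration. Accuracy and consistency are left out because they usually require comparing against a trusted source or a second system.

```python
import re

# Hypothetical sample records; an empty string means the value is missing.
records = [
    {"member_id": "1001", "email": "ana@example.org"},
    {"member_id": "1002", "email": ""},
    {"member_id": "1003", "email": "not-an-email"},
    {"member_id": "1003", "email": "lee@example.org"},
]

# Deliberately simple pattern, used only for this illustration.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def completeness(rows, field):
    """Percentage of rows where the field is filled in."""
    filled = [r for r in rows if r.get(field)]
    return 100.0 * len(filled) / len(rows)


def uniqueness(rows, field):
    """Percentage of filled-in values that are distinct."""
    values = [r[field] for r in rows if r.get(field)]
    return 100.0 * len(set(values)) / len(values) if values else 100.0


def validity(rows, field, pattern):
    """Percentage of filled-in values that match the expected format."""
    values = [r[field] for r in rows if r.get(field)]
    valid = [v for v in values if pattern.match(v)]
    return 100.0 * len(valid) / len(values) if values else 100.0


# Hypothetical targets, set by how important each element is.
targets = {
    ("member_id", "uniqueness"): 100.0,
    ("email", "completeness"): 90.0,
    ("email", "validity"): 95.0,
}
current = {
    ("member_id", "uniqueness"): uniqueness(records, "member_id"),
    ("email", "completeness"): completeness(records, "email"),
    ("email", "validity"): validity(records, "email", EMAIL_PATTERN),
}

for (field, dimension), target in targets.items():
    score = current[(field, dimension)]
    status = "met" if score >= target else "below target"
    print(f"{field:10} {dimension:13} target {target:5.1f}  current {score:5.1f}  {status}")
```

Rerunning a script like this on a schedule gives you the trend line that shows whether the cleansing effort is moving the current scores toward the targets.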

Sample Scorecard

Data Cleansing

Using the results from the data quality assessment you will now attack the data quality issues you discovered. There will be two types of data cleansing: manual and automated.

Manual Cleansing

- Manual cleansing is necessary when a judgment call must be made in order to correct the data.

- Correcting inaccurate data often requires manual cleansing.

Automated Cleansing

- If the data cleansing process strictly follows your data rules then consider automated cleansing.

- Performing automated cleansing:

  - Create data quality scripts that run either once or on a recurring basis to address data quality issues.

  - Use SQL Server 2012 Data Quality Services (DQS) to perform data cleansing.
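As an illustration of a data quality script, the sketch below applies two made-up data rules: states are stored as two-letter codes, and ten-digit phone numbers are stored in one format. The rules, the lookup table, and the field names are assumptions for the example. Any value that does not fit a rule is left unchanged so that it can go to manual cleansing instead of being guessed at.

```python
import re

# Hypothetical rule: states are stored as two-letter postal codes.
# Only a few names are listed here to keep the example short.
STATE_CODES = {
    "virginia": "VA",
    "maryland": "MD",
    "district of columbia": "DC",
}


def clean_state(value):
    """Trim and standardize a state value; leave unknown values unchanged."""
    text = " ".join(value.split())  # strip and collapse extra whitespace
    if len(text) == 2 and text.isalpha():
        return text.upper()
    return STATE_CODES.get(text.lower(), text)


def clean_phone(value):
    """Format a ten-digit phone number; leave anything else for manual review."""
    digits = re.sub(r"\D", "", value)  # keep digits only
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop a leading country code of 1
    if len(digits) != 10:
        return value
    return f"({digits[:3]}) {digits[3:6]}-{digits[6:]}"


def clean_record(record):
    """Return a cleaned copy so the original record is kept for comparison."""
    cleaned = dict(record)
    cleaned["state"] = clean_state(record["state"])
    cleaned["phone"] = clean_phone(record["phone"])
    return cleaned
```

For example, `clean_record({"state": " virginia ", "phone": "703.555.0100"})` returns a copy with the state `VA` and the phone `(703) 555-0100`, while a seven-digit phone number passes through untouched.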

Process Improvement

Using the results from the root cause analysis and stakeholder interviews discussed in previous posts, identify proactive processes that will prevent future data issues.

- Develop Standard Operating Procedures (SOPs). Documented SOPs combined with staff training will prevent many data issues. By providing a universal standard throughout the organization you keep data consistent and valid moving forward.

- Assign the role of “data steward” to a person who is responsible for supervision of data quality to ensure that data remains an asset for your association.

- We suggest spending 1-2 hours per week per department on data cleansing once the initial data cleansing project is complete.

- Create exception reports that identify ongoing data issues. Expect some data quality issues to persist, especially when customers (members, prospects, exhibitors, etc.) can interact with your data online and when multiple systems are integrated. Exception reports identify those situations and queue them for corrective action.

- Where possible, program input controls that limit the errors web users can introduce.

- Contract with a third-party company that regularly compiles company information, and verify the accuracy of your data against theirs. Several reputable firms do this, such as Dun & Bradstreet, LexisNexis®, and Bureau van Dijk.
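Exception reports and input controls can share one set of data rules. The sketch below assumes two hypothetical rules (an email must be present and well formed, and a join date cannot be in the future) and uses them both to list existing problem records and to screen a new web submission. The field names and rules are illustrative only.

```python
import re
from datetime import date

# Deliberately simple pattern, used only for this illustration.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def find_issues(record, today):
    """Return a list of rule violations for one record."""
    issues = []
    email = record.get("email")
    if not email:
        issues.append("email is missing")
    elif not EMAIL_PATTERN.match(email):
        issues.append("email is not valid")
    join_date = record.get("join_date")
    if join_date is not None and join_date > today:
        issues.append("join date is in the future")
    return issues


def exception_report(records, today):
    """List every (member_id, issue) pair that needs corrective action."""
    return [
        (record["member_id"], issue)
        for record in records
        for issue in find_issues(record, today)
    ]


def accept_submission(record, today):
    """Input control: accept a web submission only if it breaks no rule."""
    return not find_issues(record, today)


# Hypothetical records for demonstration.
members = [
    {"member_id": "1001", "email": "ana@example.org", "join_date": date(2020, 5, 1)},
    {"member_id": "1002", "email": "", "join_date": date(2021, 3, 15)},
    {"member_id": "1003", "email": "lee@example", "join_date": date(2031, 1, 1)},
]

for member_id, issue in exception_report(members, today=date(2024, 1, 1)):
    print(member_id, issue)
```

Keeping the rules in one function means the report and the input control cannot drift apart: a rule added for the weekly report is enforced on new submissions as well.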

For more information on data quality, see the first two posts in this series about creating an association data quality management plan and performing a data quality assessment. The most important thing is to begin cleaning your data now and ensure it is fully leveraged as an association asset for making decisions.